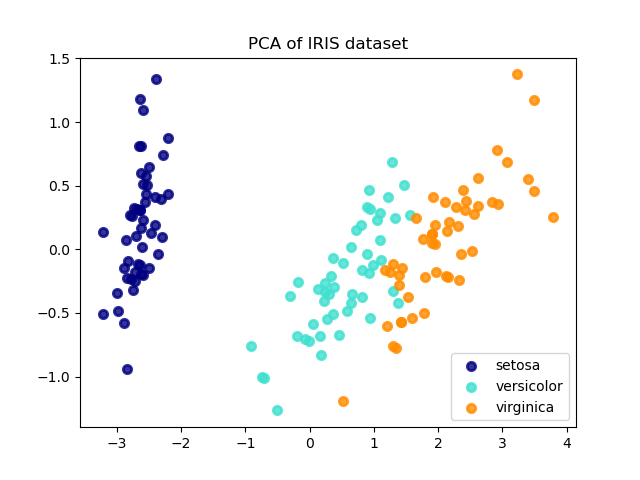

Consider $D$ as a dictionary of dimension $d \times B$, where $d = 3000$ is the number of samples and $B = 200$ is the number of bands (channels). I am trying to construct $D$ so that the classes (sub-dictionaries) are well separated, so I want to apply PCA to each individual sub-dictionary in order to form the main dictionary. I have implemented PCA in Octave and projected my data onto the resulting low-dimensional space. However, my goal is to apply PCA to hyperspectral satellite imagery like this. My question is: should I reduce the number of training pixels (the observations, $d$) or the variable dimension ($B$)? Since I use sparse representation and the dictionary concept, reducing $d$ (the observation pixels for the individual classes) seems to make more sense than reducing the number of features ($B$). The default options perform principal component analysis on the demeaned, unit-variance version of the data.
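To make the $d$ versus $B$ distinction concrete, here is a minimal NumPy sketch, assuming the rows of a sub-dictionary are training pixels and the columns are bands. The function name `pca_reduce_bands` and the synthetic data are illustrative assumptions, not part of the original question. With this layout, standard PCA shrinks the band dimension ($B \to k$) and leaves the number of pixels $d$ unchanged.

```python
import numpy as np

def pca_reduce_bands(D, k, standardize=True):
    """Project a (d x B) pixel matrix onto its first k principal components.

    Hypothetical helper: rows are pixels (observations), columns are bands.
    """
    X = D - D.mean(axis=0)                 # demean every band
    if standardize:
        std = X.std(axis=0)
        std[std == 0] = 1.0                # leave constant bands unscaled
        X = X / std                        # unit variance per band
    # Rows of Vt are the principal directions in band space.
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    components = Vt[:k]                    # shape (k, B)
    scores = X @ components.T              # shape (d, k): same pixels, fewer bands
    explained = (s[:k] ** 2).sum() / (s ** 2).sum()
    return scores, components, explained

# Synthetic stand-in for one sub-dictionary (assumed sizes from the question).
rng = np.random.default_rng(0)
d, B, k = 3000, 200, 5
latent = rng.normal(size=(d, 3))
mixing = rng.normal(size=(3, B))
D_sub = latent @ mixing + 0.01 * rng.normal(size=(d, B))

scores, components, explained = pca_reduce_bands(D_sub, k)
print(scores.shape, components.shape)
```

Reducing $d$ instead would mean keeping fewer atoms (rows), which is a sample-selection or clustering step rather than what PCA does in this orientation.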

If the 'svd' method is selected, this flag is used to set the parameter `full_matrices` in the singular value decomposition method.

My goal is to classify a hyperspectral image using sparse representation, based on the linear-combination concept, which is as follows:

#PCA METHOD FOR HYPERIMAGE MOVIE#

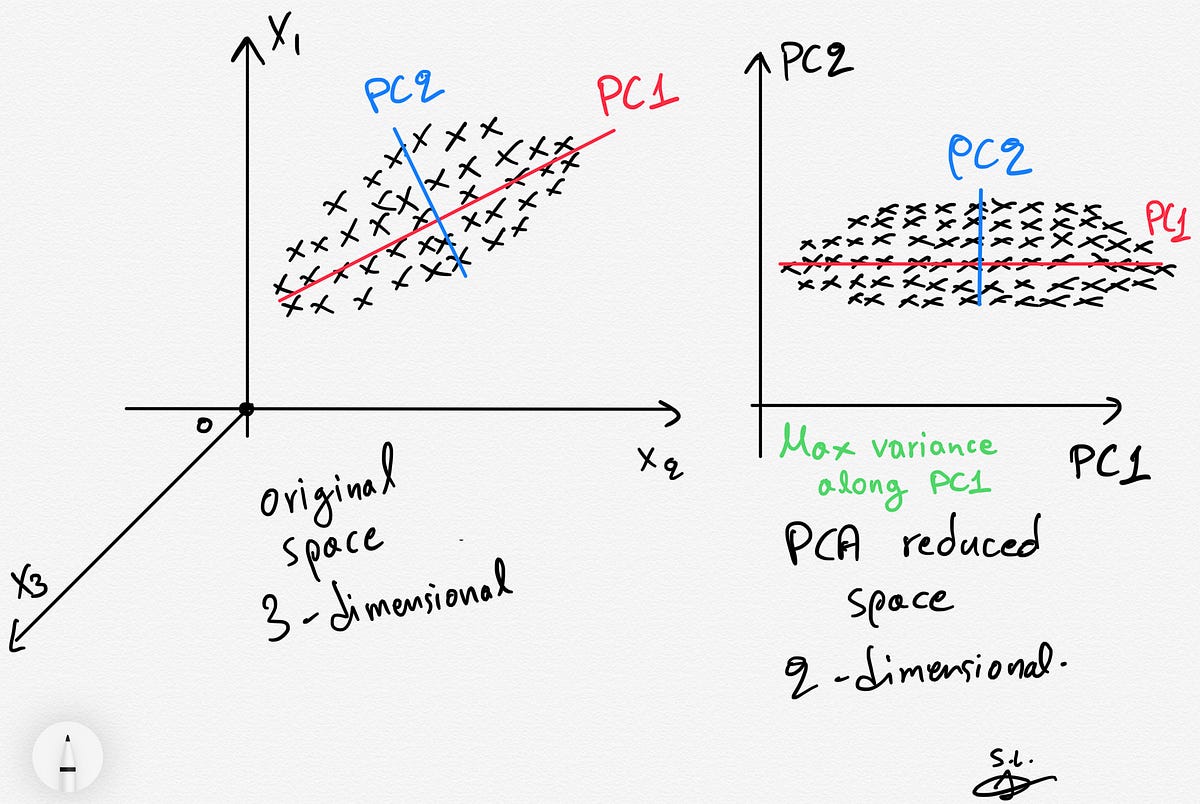
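The linear-combination idea behind sparse-representation classification can be sketched as follows, assuming a test pixel $x \in \mathbb{R}^B$ is modeled as $x \approx D^\top \alpha$ with a sparse coefficient vector $\alpha$, and that the class is chosen by the smallest reconstruction residual over the sub-dictionaries. The functions `omp` and `classify`, the greedy solver, and the synthetic data are assumptions made for illustration. The question does not say which sparse solver is used.

```python
import numpy as np

def omp(A, x, n_nonzero):
    """Greedy orthogonal matching pursuit for x ~ A @ alpha (columns of A are atoms)."""
    residual = x.copy()
    support = []
    alpha = np.zeros(A.shape[1])
    coef = np.zeros(0)
    for _ in range(n_nonzero):
        # Pick the atom most correlated with the current residual.
        j = int(np.argmax(np.abs(A.T @ residual)))
        if j in support:
            break
        support.append(j)
        # Refit all selected atoms by least squares.
        coef, *_ = np.linalg.lstsq(A[:, support], x, rcond=None)
        residual = x - A[:, support] @ coef
    alpha[support] = coef
    return alpha

def classify(D, labels, x, n_nonzero=5):
    """Assign x to the class whose atoms reconstruct it with the smallest residual."""
    A = D.T / np.linalg.norm(D, axis=1)    # (B, d) with unit-norm atoms
    alpha = omp(A, x, n_nonzero)
    classes = np.unique(labels)
    residuals = [
        np.linalg.norm(x - A[:, labels == c] @ alpha[labels == c])
        for c in classes
    ]
    return classes[int(np.argmin(residuals))], alpha

# Synthetic two-class dictionary: each class is a noisy copy of one signature.
rng = np.random.default_rng(0)
B, per_class = 50, 40
signatures = rng.normal(size=(2, B))
labels = np.repeat([0, 1], per_class)
D = signatures[labels] + 0.1 * rng.normal(size=(2 * per_class, B))

# Test pixel built as a linear combination of two class-1 atoms.
x = 0.6 * D[45] + 0.4 * D[60]
predicted, alpha = classify(D, labels, x)
print(predicted, np.count_nonzero(alpha))
```

In this layout each row of $D$ is one atom, so reducing $d$ changes how many atoms are available for the combination, while reducing $B$ changes the length of every atom and of the test pixel.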

AligNarr: Aligning Narratives of Different Length for Movie Summarization (International Max Planck Research School, MPI for Informatics, Max Planck Society; Databases and Information Systems, MPI for Informatics, Max Planck Society)

Automatic text alignment is an important problem in natural language processing. It can be used to create the data needed to train different language models. Most research about automatic summarization revolves around summarizing news articles or scientific papers, which are somewhat small texts with simple and clear structure. The bigger the difference in size between the summary and the original text, the harder the problem will be, since important information will be sparser and identifying it can be more difficult. Therefore, creating datasets from larger texts can help improve automatic summarization. In this project, we try to develop an algorithm which can automatically create a dataset for abstractive automatic summarization for bigger narrative text bodies such as movie scripts.

Commonsense knowledge (CSK) about concepts and their properties is useful for AI applications such as robust chatbots. Prior works like ConceptNet, TupleKB and others compiled large CSK collections, but are restricted in their expressiveness to subject-predicate-object (SPO) triples with simple concepts for S and monolithic strings for P and O. Also, these projects have either prioritized precision or recall, but hardly reconcile these complementary goals. This thesis presents a methodology, called Ascent, to automatically build a large-scale knowledge base (KB) of CSK assertions, with advanced expressiveness and both better precision and recall than prior works. Ascent goes beyond triples by capturing composite concepts with subgroups and aspects, and by refining assertions with semantic facets. The latter are important to express temporal and spatial validity of assertions and further qualifiers. Ascent combines open information extraction with judicious cleaning using language models. Intrinsic evaluation shows the superior size and quality of the Ascent KB, and an extrinsic evaluation for QA-support tasks underlines the benefits of Ascent.

I have been studying the concept of PCA and its implementation for dimensionality reduction for more than a month.

Principal Component Analysis (PCA) is a common technique for dimensionality reduction (DR) and for finding patterns in high-dimensional data, proposed by Pearson in 1901. It is based on computing a low-dimensional representation of a high-dimensional dataset that maximizes the total variance of the projected data, which is optimal in terms of reconstruction (Ping et al., 2017). PCA is often used to reduce the dimensionality of large data sets by transforming a large set of variables into a smaller one that still contains most of the information in the large set. Reducing the number of variables of a data set naturally comes at the expense of ...

A more common way of speeding up a machine learning algorithm is by using PCA. If your learning algorithm is too slow because the input dimension is too high, then using PCA to speed it up can be a reasonable choice.

One option is called multilinear principal component analysis (MPCA), a multilinear extension of PCA. MPCA is employed in the analysis of n-way arrays, i.e. a cube or hyper-cube of numbers, also informally referred to as a "data tensor".
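As a rough illustration of "a smaller set of variables that still contains most of the information", the sketch below applies ordinary PCA (not MPCA) to a hyperspectral cube by unfolding it into a pixels-by-bands matrix and keeping the fewest components that reach a chosen share of the variance. The function name `pca_cube`, the 99% threshold, and the synthetic cube are assumptions for illustration only.

```python
import numpy as np

def pca_cube(cube, variance_to_keep=0.99):
    """Reduce the spectral dimension of an (H, W, B) cube with ordinary PCA."""
    H, W, B = cube.shape
    X = cube.reshape(H * W, B).astype(float)   # unfold: one row per pixel
    X -= X.mean(axis=0)                        # demean every band
    cov = (X.T @ X) / (X.shape[0] - 1)         # (B, B) band covariance
    eigvals, eigvecs = np.linalg.eigh(cov)     # eigh returns ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    ratio = np.cumsum(eigvals) / eigvals.sum() # cumulative explained variance
    # Smallest k whose cumulative ratio reaches the threshold (capped at B).
    k = min(int(np.searchsorted(ratio, variance_to_keep)) + 1, B)
    reduced = (X @ eigvecs[:, :k]).reshape(H, W, k)
    return reduced, k, ratio[k - 1]

# Synthetic cube: every pixel mixes 4 made-up endmember spectra plus small noise.
rng = np.random.default_rng(0)
H, W, B = 20, 30, 40
abundances = rng.random(size=(H, W, 4))
endmembers = rng.random(size=(4, B))
cube = abundances @ endmembers + 0.001 * rng.normal(size=(H, W, B))

reduced, k, kept = pca_cube(cube)
print(reduced.shape, k)
```

Unfolding discards the spatial arrangement of the pixels during the decomposition. MPCA, by contrast, operates on the array without flattening it into a single matrix.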